PCIe vs NVMe: What’s the difference?

Last updated 8 May 2024

Storage technology has progressed significantly over the last five years, and SSDs are at the forefront of these advances. The latest smartphones, tablets and computers demand more flash storage at higher capacities, and datacentres are retaining more information as organisations turn to cloud backup services.

The explosion of SSD technology in recent years has forced manufacturers to raise their game and offer something other than a lower price. Read/write speeds are broadly similar across drives, especially those that use a SATA interface. But what if you need something quicker? Demand for performance is undoubtedly increasing too, as datacentres need data to be accessed and processed at ever faster rates.

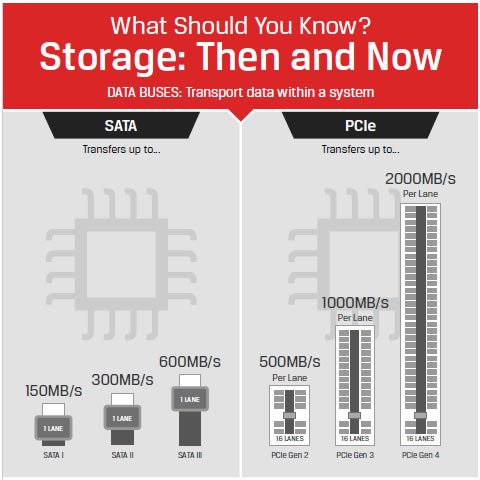

So how do you get speeds beyond the roughly 500MB/s of a SATA SSD? The answer lies in the interface. SATA 3 has long been the mainstay of SSD interface technology and will remain so for some time yet. However, more manufacturers are turning their attention to the next generation of interface technology, namely PCIe. Read our blog on the different interfaces and how they compare.

Performance characteristics also vary with the type of module and where it is used, i.e. personal, enterprise, server or industrial applications. There is a world of difference between consumer and industrial-grade storage, which we cover in a separate article.

What is PCIe?

Peripheral Component Interconnect Express, often abbreviated as PCIe or PCI-E, is a standard type of connection for internal devices in a computer. PCIe has been around since the early 2000s but is seeing increasing adoption for storage owing to its speed.

Because of SATA 3.0’s 600MB/s ceiling, PCIe is starting to supersede SATA as the high-bandwidth storage interface of choice. A PCIe connection consists of one or more data-transmission lanes operating in parallel, each of which is a serial link. Each lane consists of two pairs of wires, one for receiving and one for transmitting, so data can flow in both directions at once. A single PCIe slot can have one, four, eight or sixteen lanes, denoted x1, x4, x8 or x16.

PCIe technology enables interface speeds of up to roughly 1GB/s per lane (PCIe 3.0), versus up to 0.6GB/s for today’s SATA technology (SATA 3.0). Getting more bandwidth from SATA means adding more SATA devices, whereas PCIe bandwidth can be scaled up to 16 lanes on a single device.
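The per-lane figures above come from each link’s raw signalling rate minus its line-coding overhead. The short Python sketch below reproduces that arithmetic. The constant and function names are illustrative, while the rates themselves (8 GT/s with 128b/130b encoding for PCIe 3.0, 6 Gb/s with 8b/10b encoding for SATA 3.0) are the published link parameters. It deliberately ignores packet and protocol overhead, so treat the results as upper bounds rather than real drive speeds.

```python
# Raw per-lane line rates and line-coding efficiencies (PCIe 3.0 and SATA 3.0).
PCIE3_LINE_RATE = 8e9        # PCIe 3.0: 8 GT/s per lane, per direction
PCIE3_ENCODING = 128 / 130   # 128b/130b: 128 payload bits per 130 bits sent
SATA3_LINE_RATE = 6e9        # SATA 3.0: 6 Gb/s
SATA3_ENCODING = 8 / 10      # 8b/10b: 8 payload bits per 10 bits sent


def usable_mb_per_s(line_rate: float, encoding: float, lanes: int = 1) -> float:
    """Payload bandwidth in MB/s (1 MB = 10**6 bytes) after line coding only.

    Packet headers and other protocol overhead are ignored, so real drives
    see somewhat less than this figure.
    """
    return line_rate * encoding * lanes / 8 / 1e6


if __name__ == "__main__":
    print(f"SATA 3.0     : {usable_mb_per_s(SATA3_LINE_RATE, SATA3_ENCODING):8.1f} MB/s")
    for lanes in (1, 4, 8, 16):
        bw = usable_mb_per_s(PCIE3_LINE_RATE, PCIE3_ENCODING, lanes)
        print(f"PCIe 3.0 x{lanes:<2} : {bw:8.1f} MB/s")
```

Running it gives about 600 MB/s for SATA 3.0 and about 985 MB/s per PCIe 3.0 lane, rising to roughly 3.9 GB/s at x4 and 15.8 GB/s at x16, which is where scaling to 16 lanes on a single device pays off.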

While computers may contain a mix of various types of expansion slots, PCIe is considered the standard internal interface. Many computer motherboards today are manufactured only with PCIe slots, so progression to PCIe is inevitable.

NVMe – what does it stand for?

Non-Volatile Memory Express, or NVMe, is a communication protocol (or language) developed specifically for SSDs by a consortium of vendors including Samsung, SanDisk, Dell and Seagate. In all, 90 IT industry partners came together to create a standard driver that they could all adopt and support. Unlike SATA, which was designed for spinning disks, NVMe is designed to take advantage of the unique properties of pipeline-rich, random-access, memory-based storage. It also reflects the improvements in lowering data latency made since SATA was introduced. Because NVMe does not need to support legacy protocols, vendors can focus on taking full advantage of NVMe-based storage. NVMe also needs fewer CPU cycles to access data than SATA, which makes it immediately more attractive to use.

Not only does NVMe deliver better performance, it is also highly compatible. There is now a single software interface standard for manufacturers to adhere to, so they don’t have to write their own drivers. Vendors and IT professionals responsible for implementation no longer need to vet vendors based on their compatibility with a particular operating system; instead, they can compare the specific capabilities and cost of each card to decide which is best for their environment, a win-win for end users. Ideal use cases for NVMe include relational databases, AI and high-performance computing.

Putting together a storage device of NVMe and PCIe seems to make perfect sense and many SSD manufacturers are taking this route. But just how good could this be?

Facts, figures and features

HDDs, or spinning disks, are still widely used in datacentres because they are seen as reliable and cheap to replace, and they don’t suffer from the write wear that affects flash. Their performance is limited, however (the underlying technology dates back to the 1950s!). SATA HDDs and SSDs have only one command queue, which can hold 32 commands. NVMe supports up to 64,000 command queues, each holding up to 64,000 commands. It is clear, then, that using NVMe and PCIe together makes perfect sense for a datacentre where so much information is processed every second. A PCIe SSD communicates directly with the system CPU rather than through a SATA controller, so in essence it cuts out the middle-man and accesses information more quickly.
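The queue figures above are easier to appreciate with Little’s law, which says the throughput a device can sustain is at most the number of commands in flight divided by the time each one takes. The sketch below is a simplified model, not how any driver is implemented. The `CommandQueues` class and `iops_ceiling` helper are hypothetical, the queue counts are the article’s own figures (SATA’s single 32-command queue is the AHCI/NCQ limit), and the 100 µs per-command latency is an assumed value chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommandQueues:
    """Hypothetical model of a host interface's command-queue layout."""
    name: str
    queues: int  # number of command queues
    depth: int   # commands each queue can hold

    def max_outstanding(self) -> int:
        """Upper bound on commands that can be in flight at the same time."""
        return self.queues * self.depth


# Figures as quoted in this article (the NVMe ones are rounded).
SATA = CommandQueues("SATA (AHCI/NCQ)", queues=1, depth=32)
NVME = CommandQueues("NVMe", queues=64_000, depth=64_000)


def iops_ceiling(outstanding: int, latency_us: int) -> int:
    """Little's law: throughput = concurrency / latency, in I/O operations per second."""
    return outstanding * 1_000_000 // latency_us


if __name__ == "__main__":
    for iface in (SATA, NVME):
        print(f"{iface.name:16} up to {iface.max_outstanding():,} commands in flight")
    # Assumed 100 us per-command latency, purely for illustration.
    print(f"SATA ceiling at 100 us: {iops_ceiling(SATA.max_outstanding(), 100):,} IOPS")
```

With only 32 commands in flight, a device whose commands each take 100 µs cannot exceed 320,000 IOPS however fast its flash is. NVMe’s far larger queue capacity removes that cap in practice, so the flash and controller become the limit instead; having many queues also allows, for example, one queue per CPU core.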

So what solutions are available? Drives combining NVMe and PCIe are on the rise, and technology leader Kingston offers the DCP1000, billed as the world’s fastest SSD, with read/write speeds of a whopping 6800/6000 MB/s, over ten times faster than SATA SSDs. Additional features common across datacentre SSDs include power-failure protection, which lets the device shut down safely in the event of a power outage with no loss of data and a reduced risk of data corruption; AES 256-bit encryption; and extensive warranties, a must for datacentres. You can read more about the different levels of encryption in our blog.
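A quick back-of-the-envelope check puts the quoted 6800/6000 MB/s in context: how it compares with a SATA SSD, and how many PCIe 3.0 lanes would be needed just to carry that much data. The Python sketch below is illustrative only. The names are hypothetical, the 500 MB/s SATA baseline is the figure used at the start of this article, and the lane rate is PCIe 3.0’s raw bandwidth (8 GT/s, 128b/130b encoding) with protocol overhead ignored.

```python
import math

# Assumptions: ~500 MB/s for a SATA SSD (the figure used earlier in this article)
# and PCIe 3.0's raw per-lane rate of 8 GT/s with 128b/130b encoding (~984.6 MB/s).
SATA_SSD_MB_S = 500
PCIE3_LANE_MB_S = 8e9 * (128 / 130) / 8 / 1e6
SLOT_WIDTHS = (1, 4, 8, 16)  # slot widths listed earlier in this article


def speedup(drive_mb_s: float, baseline_mb_s: float = SATA_SSD_MB_S) -> float:
    """How many times faster a drive is than the SATA baseline."""
    return drive_mb_s / baseline_mb_s


def min_pcie3_width(drive_mb_s: float) -> int:
    """Narrowest listed PCIe 3.0 slot whose raw bandwidth covers drive_mb_s."""
    lanes_needed = math.ceil(drive_mb_s / PCIE3_LANE_MB_S)
    for width in SLOT_WIDTHS:
        if width >= lanes_needed:
            return width
    raise ValueError("throughput exceeds a PCIe 3.0 x16 link")


if __name__ == "__main__":
    for label, mb_s in (("read", 6800), ("write", 6000)):
        print(f"{label}: {speedup(mb_s):.1f}x SATA, "
              f"needs at least PCIe 3.0 x{min_pcie3_width(mb_s)}")
```

This gives roughly 13.6x and 12x the 500 MB/s baseline, consistent with the “over ten times faster” claim, and shows that such speeds exceed the raw bandwidth of a x4 PCIe 3.0 link. It is only arithmetic on the quoted figures and says nothing definitive about any particular drive’s link configuration.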

SMART (Self-Monitoring, Analysis and Reporting Technology) tools let IT teams monitor the health of PCIe SSDs: reliability, usage, remaining life, wear levelling and temperature can all be tracked, so problems can be identified early and acted on with minimal downtime.
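As an illustration of acting on these readings, the sketch below turns one drive’s health data into alerts. NVMe drives report this through a SMART/Health Information log that includes a critical-warning bitmap, composite temperature, available spare and its threshold, and a “percentage used” wear estimate; on Linux, tools such as smartmontools (`smartctl -a /dev/nvme0`) or nvme-cli (`nvme smart-log /dev/nvme0`) can display it. The dictionary keys, the `health_warnings` function and the alert thresholds are hypothetical assumptions, and the code does not read from a device itself.

```python
from typing import List, Mapping


def health_warnings(
    log: Mapping[str, int],
    max_temp_c: int = 70,    # hypothetical alert threshold
    max_used_pct: int = 80,  # hypothetical alert threshold
) -> List[str]:
    """Return human-readable warnings for one drive's SMART/Health readings.

    Keys are hypothetical names modelled on NVMe SMART/Health log fields;
    temperature_c is assumed to be already converted to degrees Celsius.
    """
    warnings = []
    if log["critical_warning"] != 0:
        # Any non-zero bit means the drive itself is flagging a problem.
        warnings.append(f"critical warning flags set: {log['critical_warning']:#04x}")
    if log["available_spare"] <= log["available_spare_threshold"]:
        warnings.append("spare capacity at or below the drive's threshold")
    if log["percentage_used"] >= max_used_pct:
        warnings.append(f"{log['percentage_used']}% of rated endurance used")
    if log["temperature_c"] > max_temp_c:
        warnings.append(f"temperature {log['temperature_c']} C exceeds {max_temp_c} C")
    return warnings


if __name__ == "__main__":
    # Illustrative readings, not taken from a real drive.
    sample = {"critical_warning": 0, "available_spare": 100,
              "available_spare_threshold": 10, "percentage_used": 3,
              "temperature_c": 38}
    print(health_warnings(sample) or "no warnings")
```

Running a check like this on readings collected at regular intervals is one way to spot problems early; in practice the thresholds should follow the drive vendor’s guidance rather than the placeholder values used here.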

The future certainly looks bright for NVMe PCIe technology, especially as 3D NAND makes its way into commercial and industrial storage. Given the fast pace of our industry, we can predict with some confidence that capacities and speeds will continue to increase.