Tech Talk

What is HBM (High Bandwidth Memory)?

Last updated 25 February 2026

Modern-day computing is increasingly demanding. Today’s applications - from AI model training and real-time analytics to advanced graphics and simulation - are more complex and data-intensive than ever before. As workloads grow, performance is no longer limited by compute alone, but by how quickly data can be accessed and moved.

To meet these demands, new technologies and innovations continue to emerge. Memory is no exception. Even with the introduction of DDR5, traditional memory architectures can struggle to provide sufficient bandwidth, creating bottlenecks that limit the performance of processors, GPUs, and accelerators.

This challenge led to the development of High Bandwidth Memory (HBM).

What is HBM?

High Bandwidth Memory (HBM) is a type of computer memory designed to deliver high bandwidth at low power consumption. It is typically used in high-performance computing applications where that level of data throughput is required.

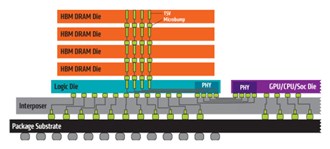

HBM uses 3D stacking technology: multiple layers of memory chips are stacked on top of each other and connected by vertical channels called TSVs (through-silicon vias). This allows a larger number of memory chips to be packed into a smaller space and reduces the distance that data needs to travel between the memory and the processor.

HBM Specifications

| Specification | HBM | HBM2/HBM2E | HBM3/HBM3E |
| --- | --- | --- | --- |
| Max Pin Transfer Rate | 1 Gbps | 3.2 Gbps | Up to 9.2 Gbps |
| Max Capacity | 4 GB | Up to 24 GB | Up to 64 GB |
| Max Bandwidth | 128 GB/s | 410 GB/s | 819 GB/s+ |
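The bandwidth figures in the table follow from the pin transfer rate and the width of the memory interface. The short Python sketch below shows that arithmetic. It assumes a 1024-bit interface per HBM stack, a figure that is not stated in the table, and the function name `peak_bandwidth_gb_per_s` is hypothetical. The 6.4 Gbps rate used for HBM3 is also an assumption, chosen because it corresponds to the 819 GB/s entry.

```python
# Minimal sketch: peak per-stack bandwidth from the pin transfer rate.
# Assumption: each HBM stack exposes a 1024-bit wide interface.
HBM_BUS_WIDTH_BITS = 1024


def peak_bandwidth_gb_per_s(pin_rate_gbps: float,
                            bus_width_bits: int = HBM_BUS_WIDTH_BITS) -> float:
    """Return peak bandwidth in GB/s for one stack.

    pin_rate_gbps is the per-pin transfer rate in gigabits per second.
    Dividing by 8 converts bits to bytes.
    """
    return pin_rate_gbps * bus_width_bits / 8


if __name__ == "__main__":
    # Pin rates: 1 and 3.2 Gbps come from the table; 6.4 Gbps is assumed for HBM3.
    for name, rate in [("HBM", 1.0), ("HBM2E", 3.2), ("HBM3", 6.4)]:
        print(f"{name}: {peak_bandwidth_gb_per_s(rate):.1f} GB/s per stack")
```

Under the same assumption, the 9.2 Gbps pin rate listed for HBM3E works out to roughly 1,178 GB/s per stack, which is why the table's last bandwidth entry carries a "+".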

What are its key advantages?

Here are the main advantages HBM holds over traditional memory technologies:

- More bandwidth: As the name suggests, bandwidth is the headline benefit. HBM delivers significantly higher data throughput than DDR-based memory, enabling faster processing and improved performance for complex workloads such as AI training, inference, and scientific computing.

- Lower power consumption: HBM is more power-efficient due to its shorter data paths and lower operating voltages. This reduces overall system energy consumption, an increasingly important factor for data centres focused on efficiency and sustainability.

- Improved thermal management: Lower power draw translates directly into reduced heat generation. Combined with advanced packaging techniques, HBM helps improve system reliability and thermal stability under heavy load.

- Higher capacity: With large per-stack capacities, HBM enables systems to process and retain vast datasets close to the processor. This is critical for AI, machine learning, and large-scale analytics workloads.

- Smaller footprint: Thanks to 3D stacking, HBM delivers high performance in a compact form factor. This makes it ideal for space-constrained environments such as GPUs, accelerators, and high-density compute platforms.

High Bandwidth Memory right now

HBM is no longer an emerging technology; it is a foundational component of modern AI and HPC systems. Many leading semiconductor vendors now rely on HBM to power next-generation accelerators and GPUs.

One of the most notable players is Micron, a key vendor partner of Simms. Micron continues to advance HBM technology, with HBM2E widely deployed today and HBM3 / HBM3E enabling the next wave of AI performance.

HBM has become essential for powering large language models, GPU acceleration, and data-intensive compute environments - cementing its role as a cornerstone of modern high-performance systems.