Tech Talk

Choosing the right SSD for the Cloud

Last updated 17 March 2022

What is the right type of SSD for the cloud?

In this article, we cover the differences, options, and considerations for selecting SSDs for cloud environments.

A common story

We often hear of businesses selecting desktop or notebook SSDs for their servers. When we ask why, the answer is usually the lure of a lower cost per GB than the equivalent server-grade devices, followed by: ‘We’ve been using them for ages with no issue’, ‘When they fail, we simply replace them’ and ‘They offer the same capacity and form factor, so why pay more?’ All valid points. However, when we ask why they are now looking at other options, the answer always relates to an issue.

That’s because, in our experience, this approach invariably ends up causing more problems than the initial cost saving is worth. Spiralling operational costs, poor quality of service, sudden obsolescence and data loss or corruption are the four biggest reasons cited for having to change.

The winners

Savvy datacentre operators, cloud service providers and internet service providers that have witnessed or experienced these pain points are now opting to take a more considered approach: one that factors in workload, total cost of ownership and environment to ensure they select SSDs that offer the best value for them and their customers.

They have worked out that it is worth not only taking the time to fully assess SSD choice against a wider list of criteria but, more importantly, conveying what that means to their customers as a way of making their offering more compelling and increasing revenue streams.

Getting started

With so many options available and new products being released at a faster rate than ever, it can be hard to know where to start. Our handy guide below covers some of the important information you need to consider for your cloud deployment.

Not all SSDs are equal

Solid-state drives are available in various form factors, interfaces and densities. They incorporate different NAND technology, controllers and features that are selected specifically to suit their intended applications and workloads. It’s important to understand upfront how these options impact performance, cost and support before selecting your device. Failure to do so can result in lost data, system downtime and wasted resources.

Categories

SSDs are often categorised as:

- ‘Consumer’ for home computing

- ‘Client’ for workplace computing

- ‘Datacentre’, ‘Enterprise’ or ‘Cloud’ for server computing

This makes it easier to understand which devices will offer the best value and are most suitable for the intended environment and expected workload. It also makes it faster to start evaluating your options, ensuring that you are neither overpaying for features you don’t need nor underpaying for a device that isn’t up to the job.

Datacentre, enterprise and cloud SSDs are offered at a premium in comparison with desktop and notebook SSDs because they offer higher and more reliable performance and include unique features that are critical for storage systems.

Endurance

Endurance is a very important differentiator between consumer drives and server drives. Consumer and client drives can operate for a long time, but not in a heavy-load environment like that of the cloud and datacentre.

Stick a consumer-grade SSD in a mixed application server and you’re looking at burning it out and replacing it within months rather than years. Server SSDs, by contrast, have much better Drive Writes Per Day (DWPD) ratings, a common way to measure flash endurance, so they can handle the loads and applications that come with cloud, including those related to artificial intelligence and machine learning. Different SSDs are made from different types of NAND. The most fundamental difference among NAND types is the number of bits stored in each cell (one, two, three and now four), which ultimately affects endurance: generally, the more bits per cell, the lower the endurance.

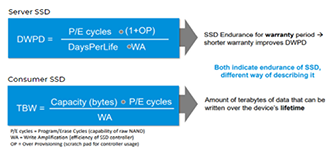

Endurance is messaged differently for server and consumer SSDs: DWPD (as discussed above) for server SSDs versus terabytes written (TBW) for consumer SSDs. For server SSDs (as illustrated below by our vendor partner Micron), endurance is quoted over the warranty period, so for the same total volume of writes a shorter warranty period gives a higher DWPD figure. For consumer SSDs, endurance is expressed in a different, but still valid, way: the number of terabytes of data that can be written over the device’s lifetime.

What’s more, as you can see from the graph, the blue bars show the growth in SSDs shipped with less than 1 DWPD, according to Micron. DWPD measures how many times you could overwrite the drive’s entire capacity each day of its warranted life; the higher the figure, the more write-heavy a workload the drive can sustain.

This is Micron data again, and it shows them shipping more high-endurance server SSDs every year and into the future as datacentre and cloud demand grows.
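The relationship between the two endurance ratings can be sketched in a few lines of Python. This is a minimal illustration using the commonly quoted conversion (TBW divided by capacity and by the number of days in the warranty period); the function names and the example drive figures are our own assumptions and do not describe any specific product.

```python
DAYS_PER_YEAR = 365


def dwpd_from_tbw(tbw_tb, capacity_tb, warranty_years):
    """Drive writes per day implied by a terabytes-written (TBW) rating."""
    if capacity_tb <= 0 or warranty_years <= 0:
        raise ValueError("capacity and warranty period must be positive")
    return tbw_tb / (capacity_tb * warranty_years * DAYS_PER_YEAR)


def tbw_from_dwpd(dwpd, capacity_tb, warranty_years):
    """Total terabytes written implied by a DWPD rating over the warranty."""
    return dwpd * capacity_tb * warranty_years * DAYS_PER_YEAR


# Hypothetical 1.92 TB drive rated for 3,504 TB written (not a real product).
five_year = dwpd_from_tbw(3504, 1.92, 5)   # roughly 1 DWPD
three_year = dwpd_from_tbw(3504, 1.92, 3)  # roughly 1.67 DWPD
print(f"5-year warranty: {five_year:.2f} DWPD")
print(f"3-year warranty: {three_year:.2f} DWPD")
```

The same total writes spread over a shorter warranty period produce a higher DWPD figure, which is why the warranty length should always be checked when comparing DWPD ratings between drives.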

Latency

Firstly, what is latency?

Well, latency means delay: the time it takes information sent from point A to appear at point B.

Latency is one of the most important factors in SSD performance. Server SSDs deliver lower latency than alternative drives because they are optimised for read-intensive and mixed-use workloads such as media streaming, online transaction processing, block and object stores and database acceleration. Fast random read and write performance, with consistently low latency for 99.9% of operations, will ultimately boost a server set-up in a cloud environment.
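To make the 99.9% figure concrete, here is a minimal Python sketch of how a latency percentile can be calculated from a set of measurements. It uses the simple nearest-rank method, which is one of several ways to define a percentile, and the sample values are invented purely for illustration.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    # Rounding guards against floating-point noise before taking the ceiling.
    rank = max(1, math.ceil(round(pct * len(ordered) / 100, 9)))
    return ordered[rank - 1]


# Invented data: 998 fast operations at 0.1 ms and 2 slow ones at 5 ms.
latencies_ms = [0.1] * 998 + [5.0] * 2

print("median:", percentile(latencies_ms, 50), "ms")
print("99.9th percentile:", percentile(latencies_ms, 99.9), "ms")
```

In this made-up data set the median looks excellent, yet the 99.9th percentile exposes the occasional slow operation. That tail is what users of a busy cloud service tend to notice, which is why consistency matters as much as average speed.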

Capacity

Datacentre SSDs can take an HDD head-on for capacity in a way that consumer or client drives cannot. Why? They’re built with very high capacities, going up to 15.36TB in some cases, with support for up to 32 NVMe namespaces. What’s more, they come in flexible capacities to suit your needs, with high-capacity models normally starting around 1.9TB and going right up to the 15TB+ mentioned above. Add simplified firmware management and more parallel sessions per storage device, and these high-capacity SSDs are ideal for server, cloud and datacentre environments.

Power & Data Loss

Another important concept in SSDs is Power Loss Protection (PLP), and when choosing an SSD you should choose one with such protection. PLP safeguards the SSD during and after a power loss event, flushing data in flight to the persistent, non-volatile flash memory while maintaining the integrity of the SSD’s mapping table so that the drive is usable and easily recognisable again when the system reboots.

A well-designed SSD will have a hardware-based design with hold-up power capacitors on board and/or a firmware PLP implementation, so look out for these. We offer such drives from Kingston, Micron and Intel that cover this and all of the above technical characteristics, making them perfect for your cloud and server set-up.

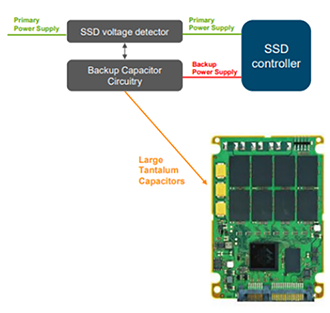

As well as this, the latest server SSDs come with Power Fail Circuitry (PFC), which client and consumer drives lack.

Having PFC in your solid-state drive solution will increase reliability for application-dependent workloads; the illustration from our vendor Micron shows how this works.

With Power Fail Circuitry, unlike on client and consumer SSDs, data in flight will be written to flash when power is lost.

Total Cost of Ownership (TCO)

TCO means more than the initial purchase cost. When selecting an SSD for cloud workloads, it is the better metric to use because it is more comprehensive: it includes not only the capital expenditure (CAPEX) of the initial buy but also the operational expenditure (OPEX) over the life of a system. OPEX includes the cost of electricity and cooling, labour for managing the systems and support in replacing hardware when failures occur.

So why is TCO important? Well, it is easy to compare initial purchase costs, but you must consider TCO to enable you to include maintenance costs in the equation to better see which solution is right for you.

Another question around TCO is how to calculate it for server storage. This isn’t done by seeking the biggest endurance number, the best warranty or the lowest cost. It is achieved by finding the solutions that meet your needs and then selecting the most appropriate one based on a holistic view of value that incorporates OPEX.

Some aren’t sure where to start with TCO. We suggest the most important step is to understand your application workload, determining how the drives will be used and how long they will last in the intended environment.
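As a rough starting point, the CAPEX plus OPEX idea described above can be sketched in Python. Everything here is an assumption made for illustration: the `DriveOption` fields, the cooling overhead factor and all of the prices, power draws and replacement counts are hypothetical and should be swapped for figures from your own environment.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 365 * 24


@dataclass
class DriveOption:
    name: str
    unit_price: float              # purchase price per drive (CAPEX)
    power_watts: float             # average power draw in service
    expected_replacements: float   # drive swaps expected over the service life
    labour_per_replacement: float  # engineer time and handling per swap


def total_cost_of_ownership(option, years, price_per_kwh, cooling_overhead=0.5):
    """CAPEX plus a simple OPEX estimate (energy, cooling, replacements)."""
    energy_kwh = option.power_watts / 1000 * HOURS_PER_YEAR * years
    # Cooling is modelled as a fixed fraction on top of the energy bill.
    energy_cost = energy_kwh * price_per_kwh * (1 + cooling_overhead)
    replacement_cost = option.expected_replacements * (
        option.unit_price + option.labour_per_replacement
    )
    return option.unit_price + energy_cost + replacement_cost


# Entirely made-up figures, in arbitrary currency units.
consumer = DriveOption("consumer", 100.0, 5.0, 2.0, 150.0)
server = DriveOption("server", 250.0, 8.0, 0.0, 150.0)

for option in (consumer, server):
    tco = total_cost_of_ownership(option, years=5, price_per_kwh=0.20)
    print(f"{option.name}: {tco:.2f} over 5 years")
```

The model is deliberately simple and leaves out downtime, data loss and the effect on service quality, all of which are harder to price. Its value is in showing how quickly a lower purchase price can be outweighed once replacement and labour costs are counted.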

Conclusion

In conclusion, choosing SSDs is always a matter of trade-offs between performance, cost and reliability. For cloud, cutting corners may be OK for some smaller niche workloads, but in general, mixed workloads in a virtualised environment simply need a server SSD to succeed and benefit the business in the long term.

Speak to the Simms server team today to discuss the server SSD options for you.